I remember sitting in a dimly lit studio three years ago, staring at a high-resolution print that looked “technically perfect” but felt completely soulless. Everyone around me was obsessing over megapixel counts and sensor noise, treating photography like a math equation rather than an art form. They were missing the point entirely. The real magic, the thing that actually makes an image feel three-dimensional and alive, isn’t about how many pixels you cram onto a flat plane; it’s about the way Foveon sensor layering changes how light is captured inside the silicon itself.

I’m not here to sell you on some overpriced gear or feed you the usual marketing fluff about “revolutionary” tech. Instead, I want to pull back the curtain on how this vertical approach actually performs when you’re out in the field, away from the controlled perfection of a lab. I’ll give you the unfiltered truth about the trade-offs, the quirks, and the sheer, unmatched clarity you get when you stop relying on color filters and start embracing the stack. Let’s get into the grit of how it actually works.

Bayer Filter vs Foveon Sensor: A Battle of Precision

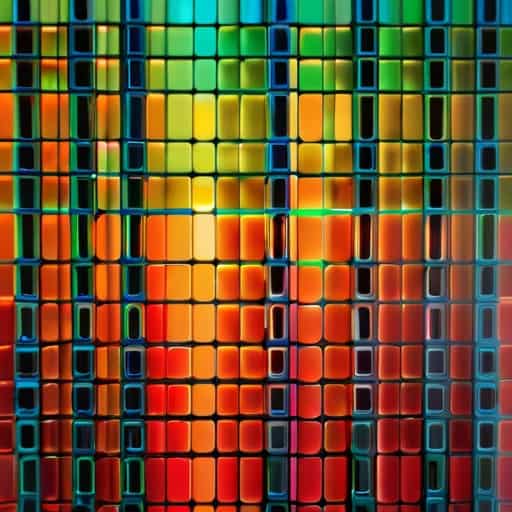

To understand why this debate is so polarizing, you have to look at how they actually handle light. Most digital cameras rely on a standard color filter array architecture, commonly known as a Bayer pattern. This method essentially plays a game of “guess the color.” Each pixel only sees one color—either red, green, or blue—and the camera’s processor has to interpolate the missing data to fill in the gaps. It works, but that interpolation is where you lose that razor-sharp, organic feel, often resulting in subtle color moiré or soft edges in fine textures.
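To make the “guess the color” step concrete, here is a minimal sketch of a Bayer capture followed by the simplest possible interpolation. It assumes an RGGB tile layout and plain neighbor averaging; the function names are hypothetical, and real camera processors use far more sophisticated demosaicing than this.

```python
def bayer_channel(row, col):
    """Return 0 (R), 1 (G), or 2 (B) for an assumed RGGB tile layout."""
    if row % 2 == 0:
        return 0 if col % 2 == 0 else 1
    return 1 if col % 2 == 0 else 2


def mosaic(image):
    """Keep only the single channel each photosite sees under its filter."""
    return [[pixel[bayer_channel(r, c)] for c, pixel in enumerate(line)]
            for r, line in enumerate(image)]


def demosaic(raw):
    """Estimate RGB per site by averaging same-color samples in a 3x3 window.

    Expects an image of at least 2x2 sites so every window contains all
    three filter colors.
    """
    height, width = len(raw), len(raw[0])
    out = []
    for r in range(height):
        line = []
        for c in range(width):
            sums, counts = [0.0, 0.0, 0.0], [0, 0, 0]
            for rr in range(max(0, r - 1), min(height, r + 2)):
                for cc in range(max(0, c - 1), min(width, c + 2)):
                    ch = bayer_channel(rr, cc)
                    sums[ch] += raw[rr][cc]
                    counts[ch] += 1
            pixel = [sums[ch] / counts[ch] for ch in range(3)]
            # The channel this site actually measured is kept untouched.
            pixel[bayer_channel(r, c)] = float(raw[r][c])
            line.append(tuple(pixel))
        out.append(line)
    return out


# A one-pixel-wide white line on black: the kind of fine detail that suffers.
white, black = (255, 255, 255), (0, 0, 0)
thin_line = [[white if c == 1 else black for c in range(4)] for _ in range(4)]
estimated = demosaic(mosaic(thin_line))
print(estimated[0][1])
```

A flat gray patch survives this round trip exactly, but the top of the thin white line comes back as `(0.0, 255.0, 255.0)`, which is cyan rather than white. That false color on fine detail is the artifact the paragraph above describes, exaggerated here by the deliberately naive averaging.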

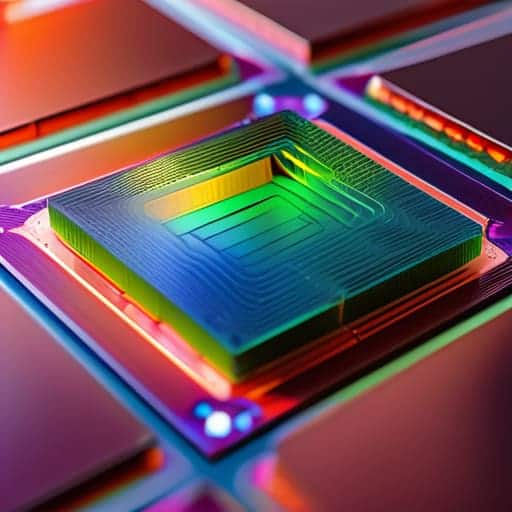

Foveon flips this entire logic on its head. Instead of placing a mosaic of filters over a single layer of photodiodes, it utilizes subsurface light absorption to capture data. Because different wavelengths of light penetrate silicon to different depths, the sensor can record full color information at every single pixel location. This eliminates the need for mathematical guesswork, providing a level of per-pixel color accuracy that a Bayer sensor simply cannot replicate. It isn’t just a different way of seeing; it’s a fundamental shift from estimation to pure, raw measurement.

Subsurface Light Absorption and the Death of Interpolation

When we talk about the “death of interpolation,” we’re really talking about the end of digital guesswork. In a standard Bayer setup, the camera looks at a pixel, sees only one color, and then uses complex math to guess what the other two colors should have been. It’s a mathematical hallucination. Foveon completely bypasses this by utilizing subsurface light absorption. Instead of guessing, the sensor captures the full RGB spectrum at every single pixel location by letting light penetrate through different layers of silicon.

This method relies on the specific way different wavelengths of light interact with silicon. Blue light is absorbed near the surface, green a little further down, and red penetrates deepest. Because of this depth-dependent sensitivity of the stacked photodiodes, the sensor doesn’t need to “invent” color data through software; it simply records it physically. This is why images from Sigma’s Foveon-based cameras, including the Quattro generation, feel so much more organic. You aren’t looking at a reconstructed mosaic of color; you’re looking at a direct capture of how the light was absorbed at each location.
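The depth behavior described above follows the Beer-Lambert law: light intensity falls off exponentially with depth, at a rate that depends on wavelength. The sketch below is only an illustration. The absorption coefficients are rough, rounded values for silicon, and the layer boundaries are hypothetical, not figures from any Sigma or Foveon datasheet.

```python
import math

# Approximate absorption coefficients for silicon, in 1/micrometer.
# Rounded, order-of-magnitude values chosen for illustration only.
ABSORPTION_PER_UM = {"blue_450nm": 2.5, "green_550nm": 0.7, "red_650nm": 0.3}

# Hypothetical depth ranges (micrometers) for a three-layer stack.
LAYER_BOUNDS_UM = [(0.0, 0.4), (0.4, 1.5), (1.5, 5.0)]


def fraction_absorbed(alpha, top, bottom):
    """Beer-Lambert: share of incoming light absorbed between two depths."""
    return math.exp(-alpha * top) - math.exp(-alpha * bottom)


def layer_response(alpha):
    """Fraction of the incoming light that each layer absorbs."""
    return [fraction_absorbed(alpha, top, bottom)
            for top, bottom in LAYER_BOUNDS_UM]


def dominant_layer(alpha):
    """Index of the layer that absorbs the most light (0 = top)."""
    response = layer_response(alpha)
    return response.index(max(response))


for name, alpha in ABSORPTION_PER_UM.items():
    shares = ", ".join(f"{share:.2f}" for share in layer_response(alpha))
    print(f"{name}: layers absorb [{shares}], strongest in layer {dominant_layer(alpha)}")
```

With these assumed numbers, blue is absorbed mostly in the top layer, green in the middle, and red in the bottom. Every layer still picks up some of every wavelength, though, so the layer signals overlap and the camera or RAW converter still has to separate them into clean red, green, and blue values.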

Pro Tips for Mastering the Foveon Learning Curve

- Don’t chase high ISOs. Since Foveon relies on light penetrating through silicon layers, low light is your enemy; keep your ISO as low as possible to avoid the noise that can drown out those fine color details.

- Use a tripod for macro work. Because you’re often shooting at high resolutions to exploit that per-pixel color, even the tiniest camera shake will ruin the sharpness that makes Foveon worth the headache.

- Stick to prime lenses. Zoom lenses often show color fringing or softness at the edges, and with no interpolation softening the result, a Foveon file tends to show those flaws plainly, so a sharp prime is your best friend.

- Watch your white balance. Foveon sensors react differently to light than Bayer sensors do, so don’t rely solely on Auto WB; manually setting it will give you much more predictable color rendering.

- Embrace the RAW workflow. You cannot skip the processing stage. To truly see the benefit of the vertical stack, you need to use software that is specifically optimized to interpret Foveon’s unique data structure.

The Bottom Line: Is Foveon Worth the Trade-off?

You’re trading raw speed and ISO performance for pure, unadulterated color accuracy and detail that Bayer sensors simply can’t replicate through math alone.

Because Foveon captures color at every single pixel location via depth, you finally escape the “mushy” look caused by traditional interpolation and demosaicing.

It isn’t a universal solution; it’s a specialized tool that demands perfect lighting and high-quality glass to truly unlock that signature vertical-stack clarity.

The End of the Guessing Game

“While Bayer sensors spend half their time essentially guessing what color a pixel should be through math, Foveon stops guessing and starts measuring. It’s the difference between looking at a mosaic and actually seeing the grain of the wood underneath.”

The Verdict on the Vertical Stack

At the end of the day, choosing between a standard Bayer sensor and Foveon technology isn’t just a technical debate; it’s a choice of how you want to perceive reality. We’ve seen how the traditional approach relies on a mosaic of filters and a heavy dose of interpolation to fill in the missing colors. Foveon throws that playbook out the window. By capturing light through subsurface absorption, it bypasses the need for interpolation entirely, delivering a level of color purity and edge definition that Bayer sensors simply can’t mimic. It is a fundamentally different way of translating photons into pixels, trading speed and high-ISO performance for per-pixel fidelity.

If you are a photographer who lives for the micro-details—the exact texture of a petal or the subtle gradation of a sunset—then the Foveon architecture is your North Star. It isn’t the most convenient or “efficient” way to capture an image, but it is undeniably one of the most honest. While the rest of the industry chases more megapixels and faster autofocus, the vertical stack remains a testament to the idea that sometimes, the best way to move forward is to dig deeper into the light itself.

Frequently Asked Questions

If Foveon sensors capture color at different depths, does that make them significantly slower or more prone to noise in low-light situations?

The short answer? Yes, absolutely. That vertical stacking is a double-edged sword. Because the sensor has to dig deeper to find the red light, the signal gets weaker the further down it goes. You’re essentially fighting physics. This makes Foveon sensors notoriously picky about light; they crave bright, midday sun. Try pushing them in a dim studio, and you’ll see noise creep in much faster than you would with a standard Bayer sensor.

How much does the physical thickness of the silicon layers limit the sensor's ability to capture certain wavelengths of light?

It’s a fundamental physics problem: silicon isn’t a magic sponge. Blue light is absorbed within a fraction of a micron of the surface, so the top layer has to catch it; almost none of it survives to the deeper layers, which is why they are effectively blind to shorter wavelengths. Red light is the opposite and needs several microns of silicon before most of it is absorbed. If the top layer is too thick, it soaks up green and red along with the blue and the color separation gets muddy; if the lower layers are too shallow, much of the red passes through uncollected. It’s a constant, frustrating balancing act of light absorption.

Does the lack of an interpolation process mean that Foveon files are much larger and more demanding on computer hardware than standard RAW files?

Short answer: Yes, though the missing interpolation step isn’t the reason. A Bayer RAW file stores one measured value per pixel location and leaves the other two to be computed later. A Foveon RAW file stores a value from each layer at every location, so for the same pixel count it can hold up to three times as much measured data. Expect large file sizes and a computer that works significantly harder to render them; your CPU is going to feel every single one of those layers.
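For a rough sense of scale, the arithmetic can be sketched as below. The sensor dimensions and bit depth are hypothetical, and the calculation ignores headers, embedded previews, and any lossless compression a real RAW format may apply.

```python
def raw_payload_megabytes(width, height, samples_per_site, bits_per_sample):
    """Uncompressed sensor data in megabytes (10**6 bytes), headers excluded."""
    total_bits = width * height * samples_per_site * bits_per_sample
    return total_bits / 8 / 1_000_000


# Hypothetical 5000 x 3000 sensor at 12 bits per sample.
bayer_mb = raw_payload_megabytes(5000, 3000, samples_per_site=1, bits_per_sample=12)
stacked_mb = raw_payload_megabytes(5000, 3000, samples_per_site=3, bits_per_sample=12)
print(f"Bayer: {bayer_mb} MB, three-layer stack: {stacked_mb} MB")
```

Under these assumptions the Bayer payload is 22.5 MB and the three-layer payload is 67.5 MB. Actual file sizes will differ depending on the camera and its RAW format.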